Did a recent study show most children are moral realists?

1.0 Introduction

An article that people are interpreting as evidence that children endorse moral realism has recently been making the rounds. The article in question is “Children deny that God could change morality,” by Madeline Reinecke and Larisa Heiphetz Solomon. Since I doubt its virality will persist, I felt it was worth addressing sooner rather than later, so my thoughts will be a bit more preliminary than usual. Nevertheless, my thesis is simple:

This article does not present strong evidence that children are moral realists.

My goal here will be to offer a brief explanation as to why. However, before proceeding, it’s worth noting that I was a research assistant in Dr. Heiphetz Solomon’s lab a little over a decade ago. I did data collection on studies related to religious belief but was not involved in research design or analysis. The experience was illuminating and I have a lot of respect for Dr. Heiphetz Solomon’s work, including this study. I think this type of research is fascinating and provides important insights into how children think about morality.

However, that does not mean I think this study, or any other, provides a reliable indicator that children endorse moral realism or antirealism in particular. Findings can yield a wealth of insights without necessarily allowing us to answer specific empirical questions with any significant measure of confidence.

2.0 The study

The study purports to show that children aged 4 to 9 tend to believe that God cannot change certain widely endorsed moral values. Children were presented with six scenarios. Each scenario involved a story in which two characters disagreed about a “widely shared” moral issue (I’ll call these “uncontroversial”), a controversial moral issue, or a physical fact. Here are examples of the wording used for each, drawn from the text:

Disagreement about uncontroversial moral issue

“This person thinks that it is okay to stomp on someone’s foot really hard. This person thinks that it is not okay to stomp on someone’s foot really hard. Which person do you agree with more?”

Disagreement about controversial moral issue

“This person thinks that it is okay to steal food to feed someone who is hungry. This person thinks that it is not okay to steal food to feed someone who is hungry. Which person do you agree with more?”

Disagreement about physical issue

“This person thinks that germs are smaller than people’s houses. This person thinks that germs are bigger than people’s houses. Which person do you agree with more?”

Children were asked which person they agreed with and whether God could change the truth in question (they were also asked how certain they were of each of these judgments). The question about whether God could change the truth in question was worded like this:

“Do you think that God could make it not okay to stomp on someone’s foot really hard?”

Yes/No

Most children said “No” to questions like this.

3.0 Evidence of objectivism?

The authors do interpret their findings to support the notion that children are intuitive realists (or “objectivists”):

This result indicates that children may perceive widely shared moral beliefs as objective in multiple ways: Not only do they report that only one person could be right in a disagreement, which reflects a common conceptualization of moral objectivity (e.g., Goodwin & Darley, 2008; Sarkissian et al., 2011; Wainryb et al., 2004), but they also reject that even an ostensibly all-powerful being could change these moral norms. We take this finding as evidence that children’s objectivism regarding widely shared moral claims emerges early and may even persist into adulthood (Heiphetz & Young, 2017; Reinecke & Horne, 2018).

I believe these conclusions are premature and unwarranted. First, the Goodwin and Darley, Sarkissian et al., and Wainryb et al. studies all rely on the disagreement paradigm. My colleague David Moss and I have offered a preliminary critique of this method, and others have likewise catalogued extensive methodological shortcomings with this paradigm (Pölzler, 2018; Pölzler & Wright, 2020). However, the most comprehensive case against the validity of the disagreement paradigm appears in my dissertation, where I dedicate an entire chapter to arguing, with supporting empirical data, that it is not a valid instrument for measuring realism/antirealism. Second, even setting validity aside, these data at best tend to show highly variable rates of realism/antirealism.

3.1 Data to the contrary

For instance, consider how Sarkissian et al. (2011) describe the results of their studies:

The present studies offer a complex picture of people’s intuitions about whether morality is objective or relative. People do have apparently objectivist intuitions in certain cases, but our results suggest that one cannot accurately capture their views in a simple claim like: ‘People are committed to moral objectivism’. On the contrary, people’s intuitions take a strikingly relativist turn when they are encouraged to consider individuals from radically different cultures or ways of life. (p. 500)

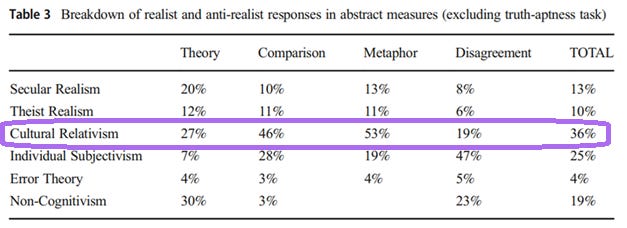

Does this look like the straightforward conclusion that people are moral objectivists/realists? No. While the authors believe people have a “fixed commitment” to realism, this “fixed” commitment seems rather malleable, shifting as soon as disagreement between people of different cultures is made even slightly salient. As I and others have noted, judging that a disagreement between two people within the same culture has a single correct answer is clearly consistent with one of the most common forms of moral antirealism: cultural relativism, on which moral facts are made true by the stances of different cultures. One shortcoming of the disagreement paradigm is that it does not offer cultural relativism as a distinct response option, so participants have no way to select this position specifically. What happens when they are given the opportunity to do so? See for yourself:

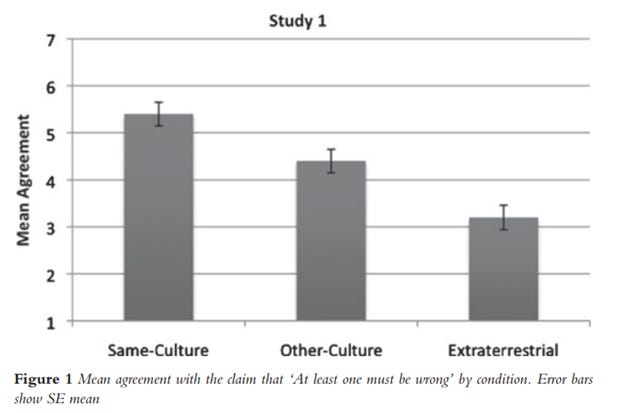

Cultural relativism was the most common (or “modal”) response for four of these five measures and had a strong showing for the fifth. Sarkissian et al. presented participants with disagreements between members of their own culture, another culture, and an alien civilization. This table shows the mean level of agreement that, with respect to a moral disagreement, at least one person has to be wrong:

Note that 7 would indicate strong agreement, or a more “realist” response, while 1 would indicate strong disagreement, or a more “relativist” response (setting aside, for a moment, that this is a false dichotomy, since relativism and realism are consistent). So within the same culture, the “realist” mean is a bit above the midpoint, while it is only marginally above the midpoint for the other-culture condition and below the midpoint for the alien-civilization condition. If the vast majority of people were committed to moral realism, all three of these means should be around 6 to 7. None of them are.

And there are two reasons to believe that the realist response rate for the same-culture condition is probably an overestimate. The first is the one I already provided: when two people from the same culture disagree, both moral realists and cultural relativists would judge that at least one of them is wrong. To see why, consider what cultural relativism holds: moral truths are determined by, and relative to, each culture. If two people are members of the same culture, there is only one standard of moral truth relative to that culture; e.g., abortion is either morally permissible or impermissible according to that culture’s standards. So if two members of that culture are arguing, one for abortion and one against it, one of them is going to be mistaken.
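To make the confound concrete, here is a minimal Python sketch under deliberately simplified, hypothetical assumptions: every participant holds exactly one of three views (realist, cultural relativist, or some other antirealist view), and each view answers the “at least one person must be wrong” item deterministically. The class and function names are invented for illustration, and the proportions in the usage lines are made up, not taken from Sarkissian et al. or any other study.

```python
import math
from dataclasses import dataclass


@dataclass
class Sample:
    """Hypothetical mix of metaethical views in a sample (proportions must sum to 1)."""
    realists: float
    cultural_relativists: float
    other_antirealists: float

    def __post_init__(self) -> None:
        total = self.realists + self.cultural_relativists + self.other_antirealists
        if not math.isclose(total, 1.0):
            raise ValueError(f"proportions must sum to 1, got {total}")


def apparent_realist_rate(sample: Sample, same_culture: bool) -> float:
    """Share of the sample answering 'at least one person must be wrong'.

    Assumption: realists always answer yes; cultural relativists answer yes
    only when both disputants share a culture (so one cultural standard
    applies); other antirealists never answer yes.
    """
    rate = sample.realists
    if same_culture:
        rate += sample.cultural_relativists
    return rate


def inflation(sample: Sample) -> float:
    """How far the same-culture item overstates the true share of realists."""
    return apparent_realist_rate(sample, same_culture=True) - sample.realists


# Made-up proportions, for illustration only.
s = Sample(realists=0.3, cultural_relativists=0.4, other_antirealists=0.3)
print(round(apparent_realist_rate(s, same_culture=True), 2))
print(round(apparent_realist_rate(s, same_culture=False), 2))
```

Under these invented numbers, the same-culture item would count 70% of the sample as “realists” even though only 30% hold that view, while the other-culture item does not pick up the relativists. In this toy model the inflation is exactly the share of cultural relativists, which is why offering cultural relativism as its own response option matters.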

A second reason the realist response rate is almost certainly exaggerated is that the authors used very extreme and clearly objectionable moral violations. Here they are, verbatim:

Horace finds his youngest child extremely unattractive and therefore kills him.

Dylan buys an expensive new knife and tests its sharpness by randomly stabbing a passerby on the street.

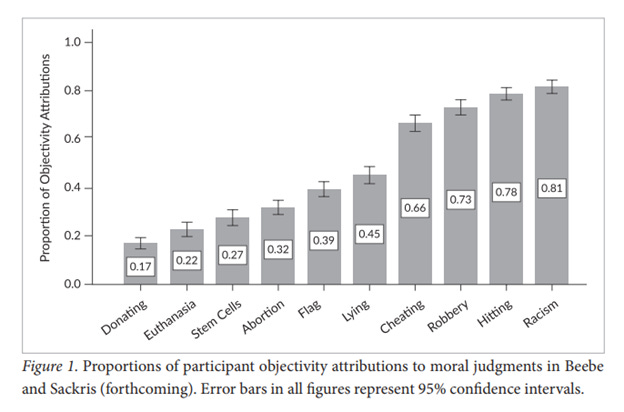

These actions would be considered extremely immoral by virtually all participants. And previous studies show that high-consensus moral issues like these tend to yield much higher rates of realist responses, while more controversial moral issues tend to yield much lower rates, often below the midpoint, indicating that a majority of people gave an antirealist response. See, for instance, this table from Beebe (2015), which shows the proportion of people who chose the realist response option in Beebe & Sackris (2016):

As you can see, the proportion of people who chose the realist response option varies wildly across individual moral issues, ranging from as low as 17% to as high as 81%. Now, if you think murdering your own children or wantonly stabbing people on the street is about as bad as, or worse than, being racist, make your own prediction: if such items had been included in this study, would the realist response rate be closer to the 81% for racism or the 17% for donating to charity?

3.2 The stimulus-as-fixed-effect fallacy

What these findings exhibit is a common problem when researchers use specific, concrete items in their measures: those items are treated as fixed and presumed to be representative of the broader set of stimuli from which they are drawn, an error known as the stimulus-as-fixed-effect fallacy (see Westfall, Nichols, & Yarkoni, 2017). Most readers will recognize that for sample data to generalize to a population of interest, the sample of participants must represent that population. In other words, the people in your sample must be drawn from the population in a quasi-random way that ensures they tend to resemble that population overall. For instance, if I wanted to find out how wealthy people in a given nation are, I’d need to survey people who reflect the population as a whole. The best way to achieve this in practice is to approximate random sampling. If I instead simply drove through wealthy neighborhoods and surveyed the people who lived there, I’d obtain a distorted picture of the average wealth of people in that nation.

However, what most people, including most researchers, fail to appreciate is that this same principle applies to the stimuli used in a study. Not only must your sample of participants approximate random sampling; so must your stimuli, whenever they are intended to represent members of a larger category, or “population.” In the context of studies in metaethics, this means that the specific moral issues you choose, e.g., abortion, stealing, lying, and so on, are themselves a sample drawn from the “population” of moral issues as a whole. Researchers typically make relatively unprincipled, ad hoc decisions about which items to choose. Even when they put some thought into which stimuli to use, they often do so based on a priori armchair supposition: they may choose two items they think are “extreme” and two they think are relatively milder, or they might choose items they feel crosscut the sorts of moral issues most people would paradigmatically think of. But they rarely put any effort into ensuring that:

The items are prototypical moral issues by the standards of the population they are sampling

The participants themselves share the same normative stance towards the moral issues in question (e.g., researchers tend to be politically liberal and more secular, while study participants are more varied in their political and religious perspectives)

Participants share a metanormative evaluation of the moral issues in question (that is, they agree with one another about the non-normative properties of the items, such as how severe they are)

Most importantly, the moral issues in question are randomly drawn from, and therefore adequately representative of, moral issues as a whole (the abstract “population of moral issues”)

They use enough different moral issues. Instead, so few moral issues are typically chosen, usually in an unprincipled way, that a given study provides only a distorted, unrepresentative cross-section of the moral domain as a whole
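The point about sampling stimuli can be illustrated with a small Python simulation. Everything in it is hypothetical: the “population” of moral issues is 33 invented items whose realist response rates are spaced evenly between 17% and 81% (the endpoints borrow the range from the Beebe table discussed earlier; the even spacing is purely an assumption). The sketch compares an estimate built from the two most extreme, high-consensus items with estimates built from randomly drawn items. Function names are illustrative only.

```python
import random
import statistics

# Hypothetical population of moral issues: each value is the proportion of
# participants who would give the realist response to that issue.
# Endpoints borrowed from the 17%-81% range discussed above; even spacing is assumed.
ITEM_REALIST_RATES = [round(0.17 + 0.02 * i, 2) for i in range(33)]


def estimate(rates: list[float]) -> float:
    """Estimate the overall realist rate by averaging across the chosen items."""
    return statistics.mean(rates)


def extreme_items_estimate(rates: list[float], k: int = 2) -> float:
    """Estimate using only the k items with the highest realist rates."""
    return estimate(sorted(rates, reverse=True)[:k])


def random_items_estimates(rates: list[float], k: int, draws: int, seed: int = 0) -> list[float]:
    """Estimates from `draws` independent random samples of k items each."""
    rng = random.Random(seed)
    return [estimate(rng.sample(rates, k)) for _ in range(draws)]


if __name__ == "__main__":
    print(f"population mean:      {estimate(ITEM_REALIST_RATES):.2f}")
    print(f"two extreme items:    {extreme_items_estimate(ITEM_REALIST_RATES):.2f}")
    two_item = random_items_estimates(ITEM_REALIST_RATES, k=2, draws=1000)
    print(f"two random items:     {min(two_item):.2f} to {max(two_item):.2f}")
    ten_item = random_items_estimates(ITEM_REALIST_RATES, k=10, draws=1000)
    print(f"ten random items avg: {statistics.mean(ten_item):.2f}")
```

Under these assumptions, the two most extreme items put the estimate at 0.80 against a population average of 0.49, and estimates from just two randomly chosen items swing widely from draw to draw; averaging over more randomly drawn items settles near the population value. Westfall, Nichols, and Yarkoni’s proposed remedy is to model stimuli as a random sample (e.g., random item effects) rather than as fixed.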

This stimulus-as-fixed-effect fallacy has sweeping and pervasive implications in psychological research. See how it is described in fMRI research:

Most functional magnetic resonance imaging (fMRI) experiments record the brain’s responses to samples of stimulus materials (e.g., faces or words). Yet the statistical modeling approaches used in fMRI research universally fail to model stimulus variability in a manner that affords population generalization, meaning that researchers’ conclusions technically apply only to the precise stimuli used in each study, and cannot be generalized to new stimuli. A direct consequence of this stimulus-as-fixed-effect fallacy is that the majority of published fMRI studies have likely overstated the strength of the statistical evidence they report. (Westfall, Nichols, & Yarkoni, 2017)

This article, along with others, highlights how falling victim to this error can lead to overstated results. The same concern applies to Sarkissian et al. (2011). Not only do they use a nonrepresentative “sample” of moral issues; they use only two. And these moral issues are not prototypical at all: murdering your own children because they are ugly and stabbing random people to test how sharp a knife is both involve bizarre, psychopathic behaviors that are utterly unlike standard, everyday moral transgressions. They are so over the top that I found them amusing and predict others would, too, especially when coupled with other stimuli used by Sarkissian et al., such as the description of aliens called “Pentars” whose only goal is to convert all matter into equilateral pentagons. The items are thus both extremely weird and extremely immoral. As we’ve already seen, severe moral transgressions have been shown to prompt much higher realist response rates. Previous research has also shown that bizarre or humorous stimuli can reduce the psychological realism of stimuli, which can further distort response rates and undermine the reliability of results (see Bauman et al., 2014).

3.3 Misleading interpretations of existing data

Given all of these considerations, we can confidently make a few observations. First, Sarkissian et al. did not find strong evidence that most people are inclined towards moral realism. They found equivocal results suggesting that people’s commitment to moral realism is tenuous at best, given how easily their responses shift towards the antirealist option. Furthermore, the high rate of realism observed in their first condition includes a significant confound: cultural relativists should choose the same response option as realists.

And, as I’ve shown, participants frequently choose cultural relativism when it is offered as an option. That such participants would be prominent within a sample is therefore not speculative but empirically confirmed. This confound could easily have inflated “realist” responses, since a substantial portion of antirealist responses would be lumped in with the realist ones.

Finally, the specific moral violations used in the study are not representative of moral violations in general; instead, they anchor the extreme end of the distribution, the end that tends to prompt realist responses. As Beebe’s findings show, realist response rates are highly variable across moral issues, so using items from one extreme end of the distribution likely massively exaggerated the realist response rate relative to what we would obtain with different moral issues. For instance, had we used donating to charity, the realist response rate for the same-culture condition would likely approach zero, and we’d run into floor effects in the remaining two conditions, where the proportion of people who favored the realist response would potentially be so low that it’d be hard to estimate the actual proportion. This illustrates that which items you use matters as much as which people you sample from a population, a consideration researchers often overlook.

Now, why did I go into so much detail about this one study? Because academic articles will often cite other articles that purport to support the author’s claims, yet careful examination often reveals that those articles do not, in fact, support those claims. Indeed, the findings often don’t even support the conclusions drawn by the cited studies’ own authors. Researchers often describe their findings in misleading ways, or in ways that can easily be misunderstood or distorted by others.

One irony in focusing on Sarkissian et al. (2011) is that, of the three studies cited by R&S (the other two being Goodwin and Darley, 2008, and Wainryb et al., 2004), this one has fewer methodological problems than either of the others. The Goodwin and Darley study uses a combined measure of realism/antirealism that includes a measure that isn’t even face valid, while their other primary measure suffers from so many methodological problems that the results of their study are essentially inconclusive. Most importantly, even in that study they found very high antirealist response rates for several moral issues; it’s just that they averaged across those issues and found a relatively high average realist response rate. If you presented 8 random fruits to someone, and they told you they liked 5 of those fruits and hated 3 of them, would you conclude the person “loves fruit”? No; a more appropriate conclusion is that they exhibit a mixed response. Goodwin and Darley do make comments to this effect in the paper:
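The fruit analogy can be made concrete with a short Python sketch. The ratings are invented for illustration: two hypothetical respondents rate eight items on a 1 to 7 scale and end up with the same mean, even though one is consistently moderate and the other is polarized. The helper name `summarize` is likewise illustrative.

```python
import statistics

# Invented ratings on a 1-7 scale for eight items (not data from any study).
CONSISTENT = [5, 5, 5, 5, 5, 5, 4, 4]
POLARIZED = [7, 7, 7, 7, 7, 1, 1, 1]


def summarize(ratings: list[int]) -> dict[str, float]:
    """Return the mean alongside measures of spread that the mean alone hides."""
    return {
        "mean": statistics.mean(ratings),
        "sd": statistics.pstdev(ratings),  # population standard deviation
        "share_low": sum(r <= 3 for r in ratings) / len(ratings),  # items rated 3 or below
    }


for name, ratings in [("consistent", CONSISTENT), ("polarized", POLARIZED)]:
    s = summarize(ratings)
    print(f"{name:>10}: mean={s['mean']:.2f} sd={s['sd']:.2f} low ratings={s['share_low']:.0%}")
```

Both respondents average 4.75, so a single mean would describe them identically; only the item-level spread reveals that one of them strongly rejects three of the eight items.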

The first major finding was that individuals were not particularly consistent in their meta-ethical positions about various ethical beliefs, and were instead highly influenced by the content of the beliefs in question. This finding suggests that unlike the meta-ethical systems of philosophers, which tend to be uniform in their treatment of a range of ethical beliefs, ordinary individuals’ meta-ethical systems are highly nuanced. (p. 1358)

But then they go on to say:

The second major finding was that ethical beliefs were treated almost as objectively as scientific or factual beliefs, and decidedly more objectively than social conventions or tastes. (p. 1359)

Both statements are true; the first emphasizes variability, while the second emphasizes comparative averages across moral and nonmoral domains. Unfortunately, people who have drawn on these findings to support the oversimplified and inaccurate narrative that ordinary people are moral realists tend to quote only the second remark (or remarks like it). In doing so, they overlook the actual content of the article and the significant qualifications one must put on such conclusions. And again, this entirely sets aside that the measures used in this study are not valid in the first place.

I’m also not the only person to notice how the results of Goodwin and Darley’s (2008) paper have been framed. Pölzler (2017) dedicated an entire paper to critiquing the tendency to frame early experimental metaethics studies as evidence of folk moral realism:

According to one of the most prominent arguments in favour of this view, ordinary people experience morality as realist-seeming, and we have therefore prima facie reason to believe that realism is true. Some proponents of this argument have claimed that the hypothesis that ordinary people experience morality as realist-seeming is supported by psychological research on folk metaethics. While most recent research has been thought to contradict this claim, four prominent earlier studies (by Goodwin and Darley, Wainryb et al., Nichols, and Nichols and Folds-Bennett) indeed seem to suggest a tendency towards realism. My aim in this paper is to provide a detailed internal critique of these four studies. I argue that, once interpreted properly, all of them turn out in line with recent research. (p. 455)

Note that Wainryb et al. (2004), the other study R&S cite, is included in this critique. As for the problems with Goodwin and Darley’s study, there are too many to present here, so I will highlight just one. Goodwin and Darley collected open-response data asking participants to explain why a person who (they were told) held the contrary moral position disagreed with them. For this method to be a valid measure of metaethical views, participants must attribute the source of disagreement to a difference in moral beliefs, standards, or values, rather than, e.g., to the other person misunderstanding the question or thinking of a specific context in which the action in question would be permissible. Goodwin and Darley reported that almost everyone (~93%) interpreted the disagreement as intended. However, I requested the raw responses and recoded them myself, along with my colleague David Moss (Bush & Moss, 2020). We report in that article that only 41% of participants interpreted the source of disagreement as intended, while 44% interpreted it in some identifiably unintended way, such as attributing the disagreement to the other person imagining a situation in which an otherwise immoral action would be justified. For instance, when asked whether it would be acceptable to rob a bank, one person explained why another person might have disagreed with them by suggesting:

This person probably has specific details of such a happening where there were extreme circumstances that lead him/her to believe robbing a bank was not morally bad.

Responses like this suggest that the participant may have attributed the disagreement to the other person conceiving of a different scenario. If this factored into their judgment, their response to the disagreement question would no longer be diagnostic of their metaethical stance.

Wainryb et al. (2004) likewise asked the children in their study to explain their answers. As the authors report, children’s nonrelativistic judgments regarding moral issues “referred exclusively to moral criteria (fairness) to justify why moral beliefs are nonrelative […]” (p. 693). Yet from a metaethical perspective, this makes little sense. Whether moral truth is relative doesn’t depend on first-order moral truths; it depends on second-order considerations about, e.g., the semantics of moral discourse and the metaphysics of moral truth. The pattern of responses children provided strongly suggests widespread normative conflation, a commonly documented tendency to default to normative (first-order) moral considerations when evaluating questions ostensibly intended to elicit metaethical positions. Taken together, then, all three of the studies R&S cite have severe methodological limitations.

These shortcomings are not limited to these three studies. In my dissertation, I ran numerous tests that likewise assessed how participants interpreted questions about metaethics, and the results were quite similar. In study after study, only a minority (and often a small minority) of participants reliably responded to various prompts in ways indicating they had interpreted the metaethical stimuli as intended. Instead, a significant majority either gave responses that made it unclear how they interpreted the question or responded in ways that strongly suggest they did not interpret it as intended.

R&S thus cite studies that do not support the claims made in their paper; we do not have convincing empirical evidence that children and adults are disposed towards moral realism. On the contrary, such conclusions are sustained only by an outdated interpretation of early studies in the literature that never supported them to begin with. As methods have improved, researchers have instead routinely found very high rates of moral antirealism (e.g., Beebe, 2015; Davis, 2021; Pölzler, Tomabechi, & Suzuki, 2023; Pölzler & Wright, 2020). Furthermore, as Moss and I have argued, and as I have subsequently supported with a considerable body of data, there are compelling reasons to believe that adults (let alone children) struggle to interpret questions about metaethics as intended. As we argued in earlier work:

The relevant metaethical theories are complex and difficult to grasp

Most people are unfamiliar with these distinctions prior to encountering them in studies

Metaethical theories are generally abstract and distant from real world practical questions lay populations would be more familiar with and expect to be asked about

There are typically plausible non-metaethical interpretations of the questions posed to respondents (Bush & Moss, 2020)

Together, these factors warrant skepticism about whether people respond as intended to stimuli ostensibly designed to elicit their metaethical stances: people will typically reinterpret these questions in some other way, e.g., as a question about their normative moral stance and whether they adopt a negative appraisal of the other person; as an epistemic question about how certain we are of our moral stances and whether others could be justified in holding contrary perspectives; as a question implicitly asking whether we would tolerate people with contrary moral standards; as a descriptive question about whether people hold contrary moral perspectives; as a question about whether we regard moral claims as exceptionless or context-sensitive; and so on.

None of these alternative, unintended interpretations is speculative; I have gathered extensive empirical data showing that such interpretations are not only common but often outnumber interpretations that appear to match the intended meaning. For instance, people provide reasonable but unintended accounts of what they take terms like “objective” or “relative” to mean; propose that someone who disagrees with them about a moral issue may be thinking of some other context; interpret statements intended to represent relativism as claims about sensitivity to context, or as the descriptive claim that different people have different views about what’s morally right or wrong; and interpret claims intended to represent realism as claims that moral rules have no exceptions. These are just a few examples from a much broader body of data showing that people struggle, across a range of measures and contexts, to interpret questions about metaethics in the way researchers intend. And if adults do as poorly as the data suggest, why should we think children would do any better?

4.0 Why children are probably not moral realists

My critique of the previous studies is intended to establish precedent. What I’ve shown is that the authors of this study cite previous research that purports to establish that both children and adults tend to take an objectivist stance towards morality. This is achieved in all three studies via the disagreement paradigm. But we have good reason to believe the disagreement paradigm is not a valid measure even for adults, much less children, who are much less likely to interpret ambiguous and challenging stimuli as intended.

Note, however, that R&S also specifically say that their findings suggest children “may perceive widely shared moral beliefs as objective in multiple ways,” then cite both earlier studies using the disagreement paradigm and their current paradigm which asks whether God could change the moral rules. Let us now turn our attention to their own methods. Does the fact that most children stated that God could not change (at least some) moral rules provide evidence that those children are moral realists?

It does provide some evidence for this claim. This might sound like a bizarre concession to make in an article critical of this claim. But on strictly Bayesian grounds, if most children had said God could change the moral rules, this would be at least some evidence that they think moral truths are contingent, a view more likely to be associated with an antirealist perspective. It follows that the opposite finding is at least some evidence in the other direction. But the fact that a datapoint provides some evidence does not mean that it provides good evidence, or that there aren’t compelling reasons to believe otherwise.

Mere evidence is cheap. The fact that many people believe Bigfoot exists and claim to have seen Bigfoot is some evidence that Bigfoot exists, for the simple reason that people claiming to have seen Bigfoot are more likely in a world where Bigfoot exists than in a world where it doesn’t. Good evidence is expensive. There is no good evidence Bigfoot exists, and plenty of reason to doubt that it does. And once we take into account what we’d expect if Bigfoot existed (more witnesses, high-quality video footage, forensic evidence, and so on) and note that it is missing, it’s more reasonable than not to think Bigfoot doesn’t exist.
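The distinction between mere and good evidence can be put in terms of odds: posterior odds equal prior odds multiplied by the likelihood ratio, P(E | H) / P(E | not-H). The minimal Python sketch below uses made-up numbers to show that a likelihood ratio only slightly above 1 barely moves a belief, while the absence of evidence we would expect if H were true (a ratio below 1) can outweigh it. Treating successive pieces of evidence as conditionally independent, so that their ratios simply multiply, is a simplifying assumption.

```python
def update(prior: float, likelihood_ratio: float) -> float:
    """Return P(H | E) given P(H) and the likelihood ratio P(E | H) / P(E | not-H)."""
    if not 0.0 < prior < 1.0:
        raise ValueError("prior must be strictly between 0 and 1")
    if likelihood_ratio <= 0.0:
        raise ValueError("likelihood ratio must be positive")
    prior_odds = prior / (1.0 - prior)
    posterior_odds = prior_odds * likelihood_ratio
    return posterior_odds / (1.0 + posterior_odds)


def update_all(prior: float, likelihood_ratios: list[float]) -> float:
    """Apply several pieces of evidence in turn, assuming conditional independence."""
    p = prior
    for lr in likelihood_ratios:
        p = update(p, lr)
    return p


if __name__ == "__main__":
    prior = 0.01  # hypothetical prior credence that Bigfoot exists
    # Eyewitness reports: somewhat more likely if Bigfoot exists (hypothetical ratio 1.5).
    print(f"after reports:          {update(prior, 1.5):.4f}")
    # No footage or forensic traces, which we'd expect if it existed (hypothetical ratio 0.2).
    print(f"after missing evidence: {update_all(prior, [1.5, 0.2]):.4f}")
```

The eyewitness reports nudge the credence upward (mere evidence), but once the missing footage and forensic traces are factored in, the credence ends up below where it started.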

A single piece of evidence should always be considered against the backdrop of the surrounding body of evidence. And at present, the overall body of empirical evidence on whether children or adults are moral realists suggests that we have yet to devise compelling measures for either population, and that the rates of unintended interpretation are so high that something more complicated may be going on. My personal hypothesis, one I’ve supported with data and argued for extensively, is indeterminacy: children and adults are neither realists nor antirealists, but instead have no determinate position. I believe the forced-choice design of empirical studies gives the illusory impression that children are “realists,” when really neither they nor adults typically have any metaethical position on the realism/antirealism dispute.

While the fact that children say God can’t change the moral rules is evidence that children are moral realists, note that (a) generalizing beyond the population they were drawn from is going to be a problem and (b) this evidence must be weighed against every other consideration for or against an early-emerging belief in moral realism. Most importantly, these findings provide such weak evidence that children are moral realists that we should have little confidence that the authors have supported the claim that “children’s objectivism regarding widely shared moral claims emerges early and may even persist into adulthood” (Reinecke & Heiphetz Solomon, 2023, p. 8).

This characterization employs the phrase “children’s objectivism,” which helps itself to the presumption that children have an objectivist stance on the matter and that this can be inferred from their response to the paradigm used in this study. This inference is too quick.

4.1 Power relations and social desirability bias

Consider the situation children in these studies are in. Children stand in a different social relation to adults than adults do to one another. Children can be punished if they do the wrong thing, and scolded if they express the wrong attitude towards the moral and social norms they are currently subject to. They are more averse to such punishment and scolding, and will often strive to assure the adults around them that they endorse the established norms (even if halfheartedly or by rote, rather than out of genuine commitment). Now these children are presented with familiar moral violations like stomping on someone’s foot and calling someone a mean name. They are then asked whether God could change these rules. Suppose a child says “Yes.” Could this signal that the child may want to commit these actions, or isn’t opposed to violating existing moral rules? In other words, could it have implications for the child’s own attitudes and conduct? I suspect it very well could, and that children may be reluctant, when asked such questions, to express a view that treats moral norms as contingent and liable to change on a whim.

To try to drive home how this might feel from the child’s perspective, imagine you were brought into the royal court of a terrible and powerful king who had the full authority to punish you as he saw fit. You are helpless before his might, and utterly vulnerable to whatever decree he might issue. You are familiar with the king’s rules, and know that the king regards them as being of the utmost importance, never to be violated, or else. The king’s aides approach you and begin asking you peculiar questions:

If the king said it was okay to steal from the royal treasure, would it be?

If the king said that it was okay to eat at tables reserved for nobility, would it be?

If the king said it was okay to wear the royal colors openly in public, would it be?

These questions would put you in a difficult situation. On the one hand, presumably the king can do whatever he wants. On the other, if you say yes, what will this say about you? Does it signal that you intend to steal, ignore noble status, or partake of royal privilege? It might, and so you may interpret the question as one about your commitment to the king’s current, actual decrees, and respond accordingly. Researchers should be mindful that social considerations can and do influence how people respond to questions. My own research collaborations provide evidence that people are sensitive to the social implications of their moral judgments and may respond accordingly (Montealegre et al., 2025), and that reputational stakes are a factor in metaethical evaluations (Moss et al., 2025).

That social considerations affect participant responses is not idle speculation. Social desirability bias is a well-documented tendency for people to respond to psychological stimuli in ways that systematically depart from an unbiased response, due to a motivation to be seen positively by researchers or by whoever else may observe the participant’s response (Piedmont, 2024). Social desirability bias has been documented among children for decades (e.g., Crandall, Crandall, & Katkovsky, 1965), and there is evidence that it is especially pronounced when studies are conducted as interviews rather than in a classroom setting (Miller et al., 2015). Sure enough, R&S’s study employed interviews.

Not only do children have an incentive to depict themselves in a positive light, but the power dynamics of interacting with adults who pose questions about morality are especially relevant to children, who risk not only being perceived negatively but also facing genuine costs for saying the wrong thing. Under these circumstances, expressing a commitment to existing moral norms by insisting God couldn’t change them makes strategic sense, insofar as it signals the child’s own commitment to those moral standards.

There are other reasons to be skeptical of these findings. Children may struggle to engage in the relevant kind of counterfactual thinking, and may have difficulty weighing the metaphysical, moral, and other considerations needed to adequately understand and address a question about God’s capabilities. For instance, do children understand the full implications of what it would mean for God to be all-powerful or all-good? Researchers who treat children’s reactions as though children can (even if implicitly) weigh relevant factors about God’s attributes and the nature of morality are presuming a great deal: that children understand the relevant concepts as intended (God, omnimax powers, modal considerations, and so on), that those concepts are salient enough to influence children’s judgments, and that children are competent at suppressing irrelevant biasing factors like social motivations and personal biases. Adults are not very good at any of these things, so it’s unclear why we should think children will perform any better.

Take, for instance, a common feature of the way adults may respond to a challenging question: substitution. Substitution occurs when we are presented with a challenging question as input, replace that question with a simpler and more readily answerable question, and then give the response to that other question as an output to the initial question. For instance, when adults are presented with the question:

A bat and a ball together cost $1.10. The bat costs $1 more than the ball. How much does the ball cost?

Instead of performing the math, people have a reflexive, intuitive tendency to judge that the bat costs $1.00 and the ball costs $0.10, even though this response is incorrect. Studies frequently show that failure rates on this question are extremely high, with fewer than 30% of participants giving the correct response in numerous studies (see e.g., Li et al., 2024). One explanation for why this occurs is substitution (De Neys, Rossi, & Houdé, 2013):

The explanation for the widespread “10 cents” bias in terms of attribute substitution is that people substitute the critical relational “more than” statement by a simpler absolute statement. That is, “the bat costs $1 more than the ball” is read as “the bat costs $1.” Hence, rather than working out the sum, people naturally parse $1.10, into $1 and 10 cents, which is easier to do. In other words, because of the substitution, people give the correct answer to the wrong question. (p. 269)
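For reference, the arithmetic that the intuitive answer skips is short. Writing b for the ball’s price in dollars (a label introduced here only for illustration), the two stated conditions, that the bat costs b + 1.00 and that the two items together cost 1.10, give:

```latex
\begin{aligned}
b + (b + 1.00) &= 1.10 \\
2b &= 0.10 \\
b &= 0.05
\end{aligned}
```

So the ball costs $0.05 and the bat costs $1.05. The intuitive answer fails the “more than” condition: a $1.00 bat costs only $0.90 more than a $0.10 ball.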

Adults engage in substitutions even in low-stakes situations and for questions that are far less abstract than the questions posed in R&S’s study, despite far greater linguistic competence and far more emotional and cognitive control than children. Yet in this case we’re dealing with children as young as four. They are far less capable of suppressing biases, interpreting tasks as intended by researchers, or even understanding English. They have greater social incentives for interpreting the study in a more acceptable way and less emotional regulation to suppress those biases. On top of all of this, they are presented with highly abstract questions about theology and metaethics in an unfamiliar context that they are far less prepared for than the typical adult participant. Why should we think they are any less likely than adults to employ heuristics that lead them to systematically interpret questions in unintended ways?

And on top of all that, the measure isn’t even a direct measure of whether children are realists. It’s an indirect measure of a tangentially related theological question that at best may imply a metaethical stance. Interpreting these results as evidence that children are moral realists therefore requires the further inference that children possess enough implicit philosophical consistency and sophistication that we can plausibly extrapolate from their apparent position on one philosophical matter to their position on another.

This brings me to the main point: we simply don’t know why children are giving these answers. Their answers would indicate that they are moral realists only if they judge that God can’t change the moral rules because they regard those rules as somehow necessary, eternal, incapable of change, and so on, which, notably, is not identical to stance-independence. One must infer stance-independence from these characteristics, and even these characteristics can only be inferred indirectly; we have no direct evidence that an implicit commitment to (or, far less likely, an explicit belief in) any of these qualities is actually driving their judgments about whether God can change moral rules. Since we don’t know what’s causing the judgments, concluding that children are moral realists on the basis of these findings is largely a matter of questionable interpretation of indirect findings.

One might conclude that children must be moral realists if they give this kind of response, but only by assuming that realism is the only or primary explanation for why they’d offer such a response, provided they interpreted the question as intended. But if they have the sophistication both to interpret the question as intended and to adopt complex metaphysical positions like moral realism, it’s not clear why we should ignore the possibility that they might hold other, similarly sophisticated metaethical views, some of which are forms of moral antirealism.

After all, the belief that God can’t change the moral rules is consistent with antirealism. Moral realism is the view that moral truth is stance-independent, while moral antirealism is the view that there are no stance-independent moral truths. That moral rules cannot be changed does not entail that they are stance-independently true. Consider the antirealist position known as ideal observer theory. According to ideal observer theory, moral truths are determined by those moral beliefs we’d adopt if we were fully informed and ideally rational. Note, though, that under those conditions moral truths still depend on what a hypothetical version of us would endorse. As such, those facts are still stance-dependent, even if one believes all rational agents would converge on the same set of moral truths and that there is therefore only a single correct set of moral standards. People may assume that a metaethical position which holds that there is a single correct set of moral standards, and that those standards don’t even depend on the standards of any actual people or cultures, is thereby a realist system. But this is not the case. Technically, this is an antirealist position.

Do I think children might be ideal observer theorists? No. I present this example to make three points. First, I doubt anyone thinks that children have sophisticated metaethical views. Even if they were moral realists, they aren’t going to be Parfitian realists or Cornell realists or otherwise endorse well-developed and distinctive realist accounts with all the bells and whistles. What they’ll endorse will be some rudimentary analog. Well, there are rudimentary analogs to ideal observer theory. Here’s one: trust smart authority figures. They probably know what’s true, and probably have the best ideas about what to do. God fits the bill here perfectly: God is by far the smartest and most authoritative person. We should defer to God. Such deference doesn’t require one to specifically presume there are stance-independent, irreducibly normative moral facts. Suppose, for instance, God recommended I not go for a walk today, or not eat that piece of cheese in the fridge. I’d listen. Why? Not simply because I think God is informing me of stance-independent normative truths. I’d assume God knows something about the nonnormative facts that I don’t know: that I’d get hit by a car if I went on that walk, or that the cheese has gone bad and will make me ill. Children may defer to God and further assume that since God already knows what’s best, he’s not going to arbitrarily change his mind on things. And this brings us to another point: if children (or adults, for that matter) were told why God wanted to change the moral rules, and the reason given was sensible, might they change their minds? I suspect so. So another issue here is that the question relies on the presumption that God would change the rules without explanation, on a whim. Even if you were a moral antirealist, you wouldn’t necessarily think God could do this.

Why? Well, part of the reason has to do with God’s other traits. God is good, kind, merciful, and just. If we know God exhibits certain thick moral attributes, it wouldn’t make sense for God to maintain these traits but then insist on rules out of accord with them. Imagine I told you a person was an eternally unchanging and perfectly kind person. Could they choose to be cruel and malicious?

This is a weird question. Maybe they could, but not while maintaining the trait of being an eternally unchanging and perfectly kind person. So to grant that they could is to reject something which has just been stipulated. This is a tall order for a child to wrap their head around. It might be quite strange even for an adult. It’d be a bit like asking if a square can be a circle. Well, you could squish the lines and make it into a circle in one sense, but in another sense, it can’t both be a square and become a circle while still being a square. Likewise, would God even be God if God decided it was okay to punch people or lie or steal? I can see adults having a serious dispute about this matter. But somehow this is supposed to be no problem at all for children.

The second reason I provided the example of ideal observer theory is to offer a concrete alternative that highlights a key fact: the study Reinecke and Heiphetz Solomon conducted does not include measures that directly assess whether the participants endorse moral realism or antirealism. Instead, their endorsement of either view can at best be inferred from their ostensible position on another topic. This creates an inferential gap that should give us considerable grounds for caution: one must presume that children denied that God could change moral rules because of an underlying (if implicit) commitment to moral realism. But this was not directly measured or observed; it requires a theoretical interpretation of the reason for the pattern of responses children provided. The possibility of alternative antirealist positions illustrates that there are identifiable reasons why, at least in principle, someone might respond to a question in a way that seems to best fit one inference about their position, when in fact their response not only fails to support that inference but reflects a commitment to a contrary view. And if anyone is tempted to reject some rudimentary version of ideal observer theory on the grounds that it’s too sophisticated, I will simply serve the same point back to them about moral realism: it’s not clear it’s any less sophisticated, at least in its more robust forms, and it’s not clear which view has the simpler rudimentary analog.

If, in observing children’s responses in these studies, people are inclined to conclude that children are implicitly committed to moral realism, we should first consider whether those responses could be attributed to other causes.

Those other causes, whatever they might be, are probably not an implicit commitment to ideal observer theory. Instead, we must ask a more fundamental question: Why might children respond to a particular question the way they do, once one takes into account how it was asked and the experimental context in which it was asked? While we can make educated guesses about why we observe a given pattern of results, in the absence of data on children’s actual reasons every interpretation will turn on background assumptions and data extraneous to the study itself.

References

Bauman, C. W., McGraw, A. P., Bartels, D. M., & Warren, C. (2014). Revisiting external validity: Concerns about trolley problems and other sacrificial dilemmas in moral psychology. Social and Personality Psychology Compass, 8(9), 536-554. https://doi.org/10.1111/spc3.12131

Beebe, J. R. (2015). The empirical study of folk metaethics. Etyka, 50, 11-28.

Beebe, J. R., & Sackris, D. (2016). Moral objectivism across the lifespan. Philosophical Psychology, 29(6), 912-929. https://doi.org/10.1080/09515089.2016.1174843

Bush, L. S., & Moss, D. (2020). Misunderstanding metaethics: Difficulties measuring folk objectivism and relativism. Diametros, 17(64), 6–21. https://doi.org/10.33392/diam.1495

Crandall, V. C., Crandall, V. J., & Katkovsky, W. (1965). A children’s social desirability questionnaire. Journal of Consulting Psychology, 29(1), 27–36. https://doi.org/10.1037/h0020966

Davis, T. (2021). Beyond objectivism: New methods for studying metaethical intuitions. Philosophical Psychology, 34(1), 125-153. https://doi.org/10.1080/09515089.2020.1845310

De Neys, W., Rossi, S., & Houdé, O. (2013). Bats, balls, and substitution sensitivity: Cognitive misers are no happy fools. Psychonomic Bulletin & Review, 20(2), 269-273. https://doi.org/10.3758/s13423-013-0384-5

Goodwin, G. P., & Darley, J. M. (2008). The psychology of meta-ethics: Exploring objectivism. Cognition, 106(3), 1339-1366. https://doi.org/10.1016/j.cognition.2007.06.007

Heiphetz, L., & Young, L. L. (2017). Can only one person be right? The development of objectivism and social preferences regarding widely shared and controversial moral beliefs. Cognition, 167, 78-90.

Li, Z., Yan, S., Liu, J., Bao, W., & Luo, J. (2024). Does the cognitive reflection test work with Chinese college students? Evidence from a Time-Limited Study. Behavioral Sciences, 14(4), 348. https://doi.org/10.3390/bs14040348

Miller, P. H., Baxter, S. D., Royer, J. A., Hitchcock, D. B., Smith, A. F., Collins, K. L., ... & Finney, C. J. (2015). Children’s social desirability: Effects of test assessment mode. Personality and Individual Differences, 83, 85-90. https://doi.org/10.1016/j.paid.2015.03.039

Montealegre, A., Bush, L. S., Moss, D., Pizarro, D. A., & Jimenez-Leal, W. (2025). Does maximizing good make people look bad? Personality and Social Psychology Bulletin. https://doi.org/10.1177/01461672251361210

Moss, D., Montealegre, A., Bush, L. S., Caviola, L., & Pizarro, D. (2025). Signaling (in) tolerance: Social evaluation and metaethical relativism and objectivism. Cognition, 254, 105984. https://doi.org/10.1016/j.cognition.2024.105984

Piedmont, R. L. (2024). Social desirability bias. In Encyclopedia of quality of life and well-being research (p. 6526). Cham: Springer International Publishing. https://doi.org/10.1007/978-3-031-17299-1_2746

Pölzler, T. (2017). Revisiting folk moral realism. Review of Philosophy and Psychology, 8(2), 455-476. https://doi.org/10.1007/s13164-016-0300-9

Pölzler, T. (2018). How to measure moral realism. Review of Philosophy and Psychology, 9(3), 647-670. https://doi.org/10.1007/s13164-018-0401-8

Pölzler, T., & Wright, J. C. (2020). Anti-realist pluralism: A new approach to folk metaethics. Review of Philosophy and Psychology, 11(1), 53-82. https://doi.org/10.1007/s13164-019-00447-8

Pölzler, T., Tomabechi, T., & Suzuki, T. (2023, November). Lay people deny morality’s objectivity across cultures (to somewhat different extents and in somewhat different ways) [Preprint]. ResearchGate. https://doi.org/10.13140/RG.2.2.24917.19689

Reinecke, M. G., & Horne, Z. (2018). Immutable morality: Even God could not change some moral facts [Preprint]. PsyArXiv. https://doi.org/10.31234/osf.io/yqm48

Reinecke, M. G., & Heiphetz Solomon, L. (2023). Children deny that God could change morality. Cognitive Development, 68, 101393. https://doi.org/10.1016/j.cogdev.2023.101393

Sarkissian, H., Park, J., Tien, D., Wright, J. C., & Knobe, J. (2011). Folk moral relativism. Mind & Language, 26(4), 482-505. https://doi.org/10.1111/j.1468-0017.2011.01428.x

Wainryb, C., Shaw, L. A., Langley, M., Cottam, K., & Lewis, R. (2004). Children’s thinking about diversity of belief in the early school years: Judgments of relativism, tolerance, and disagreeing persons. Child Development, 75(3), 687-703. https://doi.org/10.1111/j.1467-8624.2004.00701.x

Westfall, J., Nichols, T. E., & Yarkoni, T. (2017). Fixing the stimulus-as-fixed-effect fallacy in task fMRI. Wellcome Open Research, 1, 23. https://doi.org/10.12688/wellcomeopenres.10298.2